When developing, quite frequently we run into the situation where we want to delay an action a little bit, perhaps for half a second. If your software is not highly concurrent, this is as easy as a call to one of the more granular sleep() calls now available.

But what if you have a user facing 1000 DNS queries per second, wanting to delay answering all of them by a few hundred milliseconds? We can't spawn 1000 threads per second and just run nanosleep() on them!

This problem is all the more vexing because many end-users find it very easy to say the words 'just delay the answers a bit!', without realizing that this is in fact not an easy thing to do at high concurrency.

This week, we saw Pavel Odintsov run into this exact issue: nameservers from a big search engine were flooding him with questions, and Pavel was looking for solutions that would not involve actually blocking the search engine. We suggested adding a delay in answering queries, since this frequently shuts up 'back to back' generators of questions.

But then we actually had to do it. PowerDNS has a nice office these days, and it takes me 30 minutes of cycling to get there, and I find those 30 minutes are well suited to speccing out interesting solutions to problems.

What I came up with has now been implemented. It does have downsides, but it is remarkably simple and handles millions of delayed events per second with ease.

First, we employ a trick I documented back in 2007, namely using a pipe within the same process to transmit pointers. This gives you 'free' communication between threads, and since you pass pointers over the pipe, there is no need to serialize anything. We use the pipe to send a tuple containing the event and the time at which it needs to be executed.
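To make the pointer-over-a-pipe trick concrete, here is a minimal sketch. The names `DelayedEvent`, `sendEvent` and `receiveEvent` are made up for this illustration and are not the PowerDNS types; the only real APIs used are POSIX `write()` and `read()`.

```cpp
#include <unistd.h>
#include <cassert>
#include <chrono>
#include <functional>
#include <memory>
#include <stdexcept>
#include <utility>

// Hypothetical event type for this sketch: what to do, and when.
struct DelayedEvent {
  std::chrono::steady_clock::time_point when;  // moment the action is due
  std::function<void()> action;                // what to run at that moment
};

// Producer side: the event lives on the heap, only its address is written.
void sendEvent(int writeFd, std::unique_ptr<DelayedEvent> ev) {
  assert(ev != nullptr);
  DelayedEvent* raw = ev.get();
  ssize_t n = write(writeFd, &raw, sizeof(raw));
  if (n != static_cast<ssize_t>(sizeof(raw)))
    throw std::runtime_error("short or failed write to pipe");
  ev.release();  // ownership now travels through the pipe
}

// Consumer side: read one address back and take ownership again.
std::unique_ptr<DelayedEvent> receiveEvent(int readFd) {
  DelayedEvent* raw = nullptr;
  ssize_t n = read(readFd, &raw, sizeof(raw));
  if (n != static_cast<ssize_t>(sizeof(raw)))
    throw std::runtime_error("short or failed read from pipe");
  return std::unique_ptr<DelayedEvent>(raw);
}
```

Nothing is copied or serialized here except the pointer itself, which is why this only works between threads of the same process: the address means nothing anywhere else.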

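The nice thing too is that pipes guarantee that small writes are atomic: POSIX promises this for any write of at most PIPE_BUF bytes, which is at least 512 bytes and 4096 on Linux, so a pointer plus a timestamp fits comfortably. So we can have as many threads as we want put events in the pipe, without any locking on our side, and they will arrive at the worker thread uninterleaved. And no locking (in our code at least) is always a win!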
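A small experiment shows the lock-free producer side in action. This is a sketch, not PowerDNS code: `collectFromProducers` is a name invented for the illustration, and the payload is a plain heap-allocated `int` standing in for a real event.

```cpp
#include <limits.h>  // PIPE_BUF
#include <unistd.h>
#include <cassert>
#include <set>
#include <thread>
#include <vector>

static_assert(sizeof(int*) <= PIPE_BUF,
              "a pointer must fit in one atomic pipe write");

// Starts nThreads producers that each write perThread pointers into one
// shared pipe without any locking, and returns every value the reader saw.
std::set<int> collectFromProducers(int nThreads, int perThread) {
  int fds[2];
  if (pipe(fds) != 0)
    return {};
  const int readFd = fds[0];
  const int writeFd = fds[1];

  std::vector<std::thread> producers;
  for (int t = 0; t < nThreads; ++t) {
    producers.emplace_back([=] {
      for (int i = 0; i < perThread; ++i) {
        int* p = new int(t * perThread + i);  // unique value per message
        ssize_t n = write(writeFd, &p, sizeof(p));
        assert(n == static_cast<ssize_t>(sizeof(p)));
        (void)n;
      }
    });
  }

  // Reader: if two writes had been interleaved, a pointer would arrive
  // corrupted and dereferencing it would go wrong here.
  std::set<int> seen;
  for (int i = 0; i < nThreads * perThread; ++i) {
    int* p = nullptr;
    ssize_t n = read(readFd, &p, sizeof(p));
    assert(n == static_cast<ssize_t>(sizeof(p)));
    (void)n;
    seen.insert(*p);
    delete p;
  }

  for (auto& th : producers)
    th.join();
  close(readFd);
  close(writeFd);
  return seen;
}
```

Calling `collectFromProducers(4, 1000)` should hand back all 4000 distinct values, with the kernel doing the only synchronization.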

As an aside, we've been using the pipe trick since 2007 or so, and it has never shown up in any benchmarks (except in a positive fashion). However, people frequently associate pipes with "slow text based command line monstrosities", and assume pipes must be slow. In fact, pipes are core operating system infrastructure, and they are blazing fast.

Getting back to our 'DelayPipe', the worker thread reads pointers from the pipe, and puts them in a time-ordered container. That leaves the question of how to 'wait until the first event that needs to happen'. And again, we can reuse some POSIX semantics, as follows.

We don't immediately read from the pipe, but we call poll() on it first with a timeout, and that timeout is equal to the amount of time we must wait until the first event needs to be executed. And if there is no work already queued, we wait indefinitely. For safety, we also check if there is actual work in the queue that is already overdue, and in that case we skip poll() and don't read from the pipe at all. Once poll() either tells us we have a new event, or a timeout happened, we execute all events that have reached their appointed moment.
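The loop described above can be sketched roughly as follows. This is an illustration under assumptions, not the actual PowerDNS implementation: `DelayWorker`, `step()` and `pending()` are invented names, the time-ordered container is assumed to be a `std::multimap` keyed on the due time, and error handling is kept to a minimum.

```cpp
#include <poll.h>
#include <unistd.h>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <functional>
#include <map>
#include <memory>
#include <utility>

using Clock = std::chrono::steady_clock;

// Hypothetical event type for this sketch.
struct DelayedEvent {
  Clock::time_point when;
  std::function<void()> action;
};

class DelayWorker {
public:
  explicit DelayWorker(int readFd) : d_fd(readFd) { assert(readFd >= 0); }

  // One iteration of the worker loop; returns how many events were executed.
  int step() {
    int timeoutMs = -1;  // empty queue: block until a producer writes
    bool overdue = false;
    if (!d_queue.empty()) {
      auto wait = d_queue.begin()->first - Clock::now();
      if (wait <= Clock::duration::zero())
        overdue = true;  // work is already late: skip poll() entirely
      else  // round up so we never wake just before the event is due
        timeoutMs = static_cast<int>(
            std::chrono::duration_cast<std::chrono::milliseconds>(wait).count()) + 1;
    }

    if (!overdue) {
      pollfd pfd{d_fd, POLLIN, 0};
      if (poll(&pfd, 1, timeoutMs) > 0 && (pfd.revents & POLLIN)) {
        DelayedEvent* raw = nullptr;
        if (read(d_fd, &raw, sizeof(raw)) == static_cast<ssize_t>(sizeof(raw))) {
          Clock::time_point when = raw->when;
          d_queue.emplace(when, std::unique_ptr<DelayedEvent>(raw));
        }
      }
    }

    // Run everything that has reached its appointed moment.
    int executed = 0;
    const auto now = Clock::now();
    while (!d_queue.empty() && d_queue.begin()->first <= now) {
      auto ev = std::move(d_queue.begin()->second);
      d_queue.erase(d_queue.begin());
      ev->action();
      ++executed;
    }
    return executed;
  }

  std::size_t pending() const { return d_queue.size(); }

private:
  int d_fd;
  std::multimap<Clock::time_point, std::unique_ptr<DelayedEvent>> d_queue;
};
```

A real worker thread would simply call `step()` in an endless loop. Note that the pipe is drained one pointer per iteration here, which keeps the sketch short; reading several pointers per wakeup would be a natural refinement.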

With this simple setup, we get a solution that is thread-safe, since many threads can put things in the pipe simultaneously. We do have only one executor of delayed events, which might be unacceptable if the execution of events is actually slow. However, we could easily spawn more of these threads, and give them their own pipe.

A potential downside is that this setup malloc()s memory in a producer thread and free()s it in the worker thread, something that is known to be a heavily locked and potentially slow operation in many malloc implementations. Benchmarking on recent platforms, however, has not shown any appreciable overhead because of this.

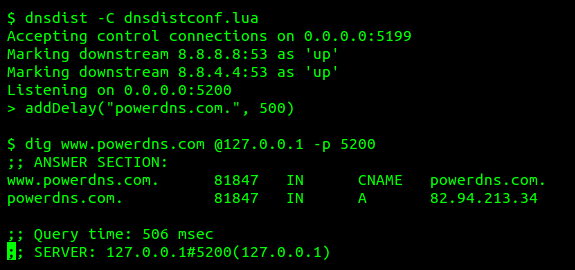

Finally, after only a 30 minute trip on my bike, and half a day of coding, we were able to get this up and running:

The actual code can be found here and here. The testcase shows how it works in practice. I hope this has been useful for you!

PS: before you cry "you don't know how deep the pipe is!", please do realize we don't actually use the pipe for queueing; we have a separate container for that. The pipe is only a nice way to get easy producer/consumer communications between threads with sane semantics.